Meredith A. Whitley¹, Adam Fraser², Oliver Dudfield³, Paula Yarrow⁴, Nicola Van der Merwe⁴

¹ Adelphi University, USA

² Laureus Sport for Good Foundation, USA

³ Commonwealth Secretariat, UK

⁴ Waves For Change, South Africa

Citation:

Whitley, M. A., Fraser, A., Dudfield, O., Yarrow, P., & Van der Merwe, N. (2020). “Insights on the funding landscape for monitoring, evaluation, and research in sport for development.” Journal of Sport for Development, 8(14), 21-35. Retrieved from https://jsfd.org/

ABSTRACT

The international community’s increasing attention to sport in policy decisions, along with growing programmatic and scholarship activity, demonstrates the need for data that facilitates evidence-informed decision making by organizations, policy actors, and funders within the Sport for Development (SfD) field. To achieve this, there is a need for effective and sustainable investment, resource mobilization, and funding streams that support meaningful and rigorous monitoring, evaluation, and research. In this paper, the SfD funding landscape as it pertains to monitoring, evaluation, and research is critically appraised by a diverse writing team. This appraisal is informed by our experiences as stakeholders, along with findings from two recent systematic reviews and knowledge accumulated from SfD literature. Various topics are discussed (e.g., intervention theories, external frameworks, targeted funding, collective impact, transparent funding climate), with the conclusion that all actors must support the pursuit of participatory, rigorous, process-centered (but outcome-aware) monitoring, evaluation, and research that aims to enhance our understanding of SfD. Ultimately, this monitoring, evaluation, and research should improve both policy and intervention design and implementation while also defining and testing more realistic, contextually relevant, culturally aware outcomes and impacts.

INTRODUCTION

Within the Sport for Development (SfD) field, there is a need for effective and sustainable investment, resource mobilization, and funding streams to support meaningful and rigorous monitoring, evaluation, and research. This can enhance the shared evidence base, resulting in evidence-informed decision making by organizations, policy actors, and funders. The priority areas identified in the Kazan Action Plan (Ministers and Senior Officials Responsible for Physical Education and Sport [MINEPS], 2017), the United Nations Action Plan on Sport for Development and Peace (United Nations General Assembly [UN GA] Resolution 73/24, 2018), and Commonwealth Sports Ministers Meeting (2018) support this approach. However, these areas are often overlooked, with academic writing more frequently exploring the funding landscape in the context of international cooperation, intervention funding, and ownership of research and evaluative processes (Coalter, 2013; Harris & Adams, 2016; Jeanes & Lindsey, 2014; Levermore & Beacom, 2014; Sherry et al., 2017). This paper integrates clear policy direction on enhanced statistics and (scaled) data on sport, less “traditional” participatory methods and methodologies, and enhanced monitoring, evaluation, and research in SfD. This integration has not been a feature of previous programmatic-focused monitoring, evaluation, and research. Additionally, there have been calls for more comprehensive, critical, productive dialogue regarding the funding landscape for monitoring, evaluation, and research in SfD among various stakeholders. In particular, Levermore and Beacom (2014) suggested that

Future research and writing on the subject can only be meaningful if it engages more effectively with all stakeholders involved with the development process. This means listening to the voices of communities where sports-based interventions are being considered, as well as the views of policymakers and funding bodies working in Northern and Southern policy arenas. (p. 134)

This paper engages in this dialogue through a writing team of various stakeholders (i.e., a scholar, a global funder CEO, an international public official, a development director, and a program evaluator and researcher), enabling a more nuanced discussion of monitoring, evaluation, and research that avoids the either–or perspective on “traditional” vs. less “traditional” approaches. In this paper, we consider questions of both rigor and scale, exploring various approaches and related concerns while outlining ways forward that align with or advance best practices. Our diverse experiences and expertise inform our discussion below, although we recognize the limitations of drawing on our own experiences and organizations. These insights are also informed by knowledge accumulated from the SfD literature and findings from two recent systematic reviews assessing the quality of evidence reported for SfD interventions (Darnell et al., 2019; Whitley, Massey, Camiré, Blom et al., 2019; Whitley, Massey, Camiré, Boutet, & Borbee, 2019). This literature is referenced below, where pertinent. Given concerns about academic writing in the SfD sector frequently being restricted to those who can access journals behind paywalls (Gardam, Giles, & Hayhurst, 2017; Whitley, Farrell et al., 2019), this paper is also intended to share these insights (both new and previously cited) in one resource that is open access and accessible online, thereby broadening the audience who can engage with this dialogue.

Monitoring, evaluation, research, and related terms (e.g., accountability, impact) are defined and used differently within and beyond SfD, requiring clarity on how these terms will be used in this paper. These operational definitions are informed by content shared on the International Platform on Sport and Development (2018), among other sources (Oxfam GB, 2020; Patton, 2008; Poister, 2015). Monitoring refers to systematic, ongoing collection and review of information that documents progress against intervention plans and toward intervention objectives. When monitoring is integrated meaningfully into intervention design and daily management, learning processes unfold more rapidly, resulting in intervention adaptations that optimize impact. Data acquired through monitoring can be part of evaluation efforts, but evaluations should extend ongoing monitoring activities through more in-depth, objective assessments at specific time points. Evaluations should enhance understanding of the intervention’s relevance, effectiveness, efficiency, impact, and sustainability. Ultimately, both monitoring and evaluation (M&E) can be used to document outputs and outcomes, influence learning, decision making, and iterative planning processes, strengthen accountability, and/or demonstrate impact. A recent trend in SfD is MEL, with the “L” representing the internal learning that should result from M&E processes. Conversely, research is intended to test broader theories and produce generalizable knowledge, with questions more frequently driven by scholars (rather than intervention stakeholders) and value determined by contribution to knowledge (rather than utility of knowledge). We recognize that evaluation and research are not mutually exclusive, and there are arguments that evaluation is a subset of research (and vice versa). 
However, in this paper, evaluation will refer to impact of/on specific SfD policies and interventions, while research will refer to impact of/on the overall SfD field.

We now present a critical, reflexive dialogue regarding the funding landscape for monitoring, evaluation, and research in SfD among various stakeholders, beginning with a discussion of intervention theories and external frameworks, targeted funding for SfD monitoring, evaluation, and research, and collective monitoring, evaluation, and research efforts. These sections are followed by an exploration of MEL personnel, research collaborations, and transparency in the funding climate.

THEORIES AND FRAMEWORKS

Our assessment begins with the need for an intentional, aligned approach to intervention planning, implementation, and monitoring, evaluation, and research. There is a need to shift from pursuing evidence through efforts that are externally defined (i.e., top-down), generalized, exclusionary, stabilizing, outcome centered, and summative to those that prioritize understanding through participatory (i.e., bottom-up), localized, collaborative, destabilizing, process centered, and formative steps (Hayhurst, 2016; Kay et al., 2016; Nicholls et al., 2011). Additionally, there are ongoing debates in the SfD field regarding theoretical frameworks, philosophy of knowledge production, and research traditions. For example, scholars question positivist forms of evidence, with concerns that it may reinforce systems of hegemony and oppression while suppressing local input and knowledge production (Kay et al., 2016; Nicholls et al., 2011). Others (notably Coalter, 2013) portray these as critiques from liberationist researchers eschewing attempts to define and measure impacts and outcomes. Following in the footsteps of Massey and Whitley (2019), “rather than lay blanket critiques across different research paradigms and epistemologies, there is a need to discuss higher levels of sophistication in both instrumental/positivist (i.e., quantitative) and descriptive/critical (i.e., qualitative) [SfD] research” (p. 177). We agree with this sentiment.

Funders can support these efforts by welcoming different theories and methodologies that are rigorous, culturally relevant, theoretically diverse, and methodologically encompassing (Massey & Whitley, 2019), with a shift toward understanding rather than evidence. This learning-centered approach was embraced by the Inter-American Development Bank (IDB) in their effort to better understand how, why, and in which conditions SfD may influence development across IDB-sponsored initiatives in 18 Latin American and Caribbean countries (Jaitman & Scartascini, 2017). This localized, culturally specific, and developmental approach identified these future focus areas: (a) increasing physical activity levels; (b) improving data collection and evaluation, including potential harm from SfD interventions; and (c) understanding the “spill-over” of investment into other policy areas. The importance of localized, culturally specific M&E is also prioritized in a multistakeholder international initiative to monitor and evaluate the contribution of sport to the Sustainable Development Goals (SDGs). This initiative underscores that “effective MEL is likely to be different within each regional, national, or organizational context, (and as such) there is a need to focus on supporting the institutional arrangements required in organizational contexts to operationalize monitoring systems” (Commonwealth Secretariat, 2019a, pp. 48-49). This has been operationalized in Jamaica through the development of an M&E system on strategic national priorities to be pursued in the area of sport by the national government and civil society stakeholders; this system is wholly aligned to the Vision 2030 Jamaica National Development Plan (NDP) and Medium Term Socio-Economic Policy Framework (MTF) of the country (Jamaica Information Service, 2019).

Additionally, the trend toward separating frontline staff from monitoring, evaluation, and research findings limits the ability to truly engage all in a participative, collaborative, iterative, process-oriented approach that leads to enhanced motivation, meaningful learning, and shared decision making (Kaufman et al., 2013). It also reduces the likelihood that the findings will be locally driven, culturally specific, and developmental (Kay et al., 2016). One example that bucks this trend is Waves for Change (W4C), which embeds Peer Youth Researchers (PYRs) in each site. PYRs (18-26 years) have at least one year of experience as W4C coaches and receive training on simple, largely qualitative techniques. They explore intervention fidelity, facilitate focus group discussions, and document stories of change through photos, voice notes, short videos, etc. They interpret and share results with their on-ground teams, enabling all to meaningfully engage with and learn from MEL processes (i.e., feedback loops) (Kaufman et al., 2013). While fulfilling the PYR role, they continue working as senior coaches, facilitating a collaborative, locally driven, process-oriented approach to evaluation. Another example is from Soccer Without Borders, which has “game-ified” their M&E practices to motivate and engage their staff in consistent, complete data collection through the “M&E World Cup” (M. Connor, personal communication, February 19, 2020). This ongoing competition runs the entirety of the school year to ensure all coaches at all sites are engaged in the M&E process from baseline to endline. Coaches and intervention leaders are then guided through a “data navigation” process to ensure the data are utilized to make intervention improvements and highlight strengths.

While we agree that pursuit of understanding via these processes is necessary to ensure monitoring, evaluation, and research is meaningful to all actors, outcomes-based research should still be valued. However, instead of using performance indicators or other benchmarks that, at times, disempower organizations by prioritizing outputs (e.g., participant numbers), constructs (e.g., self-esteem), data (e.g., quantitative metrics), and frameworks (e.g., evaluation frameworks from Northern settings) preferred by external stakeholders (Coalter & Taylor, 2010; Harris & Adams, 2016; Henne, 2017; Kay et al., 2016; Svensson & Hambrick, 2019), we encourage stakeholders to outline, adopt, and test their own intervention theories. This can facilitate purposeful and thoughtful measurement of relevant outcomes and impacts, along with the inputs and processes that may (not) lead to these outcomes and impacts. Past critiques within and beyond SfD have centered on the perception of intervention theories as static products required by organizations’ boards and funders rather than sought by organizations or communities themselves, particularly in the Global South (Harris & Adams, 2016; James, 2011). However, if they are embraced as evolving products and processes that are participative, collaborative, iterative, and developmental, they can unlock the flexibility necessary for practitioners to cultivate dynamic, responsive, effective interventions (Haudenhuyse et al., 2013; Nicholls et al., 2011; Rogers, 2008). For example, if these theories are developed, monitored, and evaluated by those delivering interventions, there is the potential to better understand how change happens in different settings. Additionally, the assumptions in these intervention theories can be tested, including the scale of the inputs required to deliver the envisaged/claimed outcomes and impacts. 
The latter is particularly important in informing policy and the scale of investment required to ensure the contribution of SfD to envisaged outcomes is realized.

To do this, funders are encouraged to set expectations (with associated funding and support) for organizations to outline, adopt, and test intervention theories or simply assess intervention quality, fidelity, and utility, along with the critical factors that may impact intervention efficacy. Ideally, this means supporting organizations in setting up their own MEL frameworks and/or utilizing key public policy frameworks (particularly those at a domestic level) rather than requiring specific approaches (e.g., logic models, logframes) that may prioritize funder needs (Kay, 2012). This responds to calls for truly collaborative MEL processes in mutually respectful climates that deconstruct inequitable power dynamics and support the coproduction of knowledge among all involved (e.g., practitioners, researchers, donors) (Hayhurst, 2016; Kay et al., 2016; Nicholls et al., 2011). This could be achieved during the funding start-up period, with remote or in-person guidance provided for organizations to establish their own framework (or adopt/adapt an existing framework) that enables accountability to the funder (e.g., reporting requirements), aligns with domestic policy priorities, and develops processes that test assumptions, encourage learning, and examine impact of scaling (if expected) on intervention quality, fidelity, and utility. Engaging in these conversations early on, before targets are agreed on and reports are developed, can help organizations avoid mission drift and ensure ownership of their framework. It is also important to note that funders, whether governmental or nongovernmental, should outline, adopt, and test their own institutional intervention theories that relate to the portfolio of their partnerships or SfD investments toward a particular policy or development objective. 
A pertinent example is work by the United Nations Development Programme (UNDP, 2017) in Brazil, which analyzed the contribution of sports and physical activity toward good health, sociability, cognition, productivity, and quality of life within the country. Key principles emerged from this data to guide interventions aimed at enhancing and refining the engagement of people in sport and physical activity and its associated impact. These include: (a) shared responsibility to enhance participation between the population, the public sector, private initiatives, and the third sector; (b) the importance of developing more active school environments; (c) a need to address inequality of access to sport and physical activity; and (d) a requirement to broaden the understanding of sport and physical activity as a tool for improving health in the country.

TARGETED FUNDING

Sustainable and diverse investments, funding streams, and resource mobilization can be created specifically for monitoring, evaluation, and research, which aligns with recommendations in the Kazan Action Plan (MINEPS, 2017) and the United Nations Action Plan on Sport for Development and Peace (UN GA Resolution 73/24, 2018). For example, the Erasmus+ Programme of the European Union funded the Sport & Society Research Unit (2020) at Vrije Universiteit Brussel to develop a user-friendly M&E manual that helps SfD organizations aiming to increase the level of employability of their adolescent participants. This project is a collaboration with a number of SfD partners, including Street League, Magic Bus, Oltalom Sport Association, and Sport 4 Life UK. Additionally, the International Platform on Sport and Development (sportanddev), in partnership with the Japan Sport Council, is developing a guidebook/toolkit on how to apply sport as a developmental tool, which will include a focus on intervention planning, theory, management, and M&E (B. Sanders, personal communication, February 20, 2020). Targeted funding could also support: (a) research examining questions about intervention efficacy (e.g., rigorous experimental research designs) and (b) research examining questions about beneficiaries, moderating variables, etc. (e.g., alternative/flexible research designs that are still rigorous). Funders should also consider removing/minimizing restrictions on the use of these funds, along with welcoming research applications developed by frontline delivery organizations, with partnerships developed that embrace local and (if relevant) global priorities. For example, W4C (a South African nonprofit organization) partnered with The New School (a New York university) for research examining the physiological indicators of improved mental health among participants. 
This research is funded by Laureus Sport for Good (an international NGO) and advised by the University of Cape Town (a local university). This study was not designed in the funder’s boardroom or at a university but on the ground in South Africa. Setting research objectives at the local level removes some elements of top-down, Global North performance indicators defining success or failure (Henne, 2017). This local evidence is particularly necessary if SfD is to have greater success in accessing local (federal, state, and municipal) government funding for sport that is directed toward delivering nonsport outcomes. Examples of public funding delivered at scale, and yet still driven by local belief in impact, are Programa Segundo Tempo in Brazil (Reverdito et al., 2016) and Sport England (2020).

COLLECTIVE EFFORTS

Organizations can also engage in collective monitoring, evaluation, and research efforts, with the Kazan Action Plan (MINEPS, 2017) and the United Nations Action Plan on Sport for Development and Peace (UN GA Resolution 73/24, 2018) both calling for improved, coordinated, collaborative monitoring, evaluation, and research efforts. This begins with the development of common indicators for SfD, such as those currently under development in response to Action 2 in the Kazan Action Plan (Commonwealth Secretariat, 2019a), along with other common measurement approaches (e.g., the UK Sport for Development Coalition). The Philadelphia Youth Sports Collaborative (2020) is working to establish shared definitions and methods for collecting, analyzing, and disseminating data on youth demographics, participation, progress, and outcomes for the SfD field. Svensson and Hambrick (2019) describe an SfD organization hosting “an international gathering of similar organizations from across the world to meet and develop shared mental health indicators” (p. 546). One caveat to this approach is the potential for organizations to use irrelevant indicators simply because of the need to collect data for reporting, particularly when externally driven quantitative metrics may supersede alternative forms of evidence driven by grassroots practitioners (Henne, 2017; Kay et al., 2016). Another concern is that indicators tend to be biased in favor of those in power (e.g., Global North vs. Global South actors; funders vs. practitioners) and tend to negate the diversity of conditions in different contexts (Henne, 2017; Kay et al., 2016). To minimize these concerns, capacity building efforts should help organizations determine if (and when) shared indicators can drive their own learning and decision making, with funders and policy actors supporting organizations’ decisions (and thereby disrupting traditional power dynamics that typically subjugate knowledge) (Nicholls et al., 2011). 
The establishment of an open-ended working group structure to support a diverse group of stakeholders in monitoring and evaluating the contribution of sport-based policy and programming to the SDGs in their specific contexts is an example of this recommendation in practice (Commonwealth Secretariat, 2019b).

Not only do these steps ease organizations’ efforts to improve the quality of their monitoring, evaluation, and research, but they also allow for greater benchmarking and cross-comparison (Svensson & Hambrick, 2019), along with the potential to demonstrate collective impact and engage in systems thinking. Funders should support these efforts, particularly since perceived competition over funds may discourage collaboration (Lindsey, 2013). This can begin with supporting common indicators and events that promote coordinated and collaborative efforts, rather than requiring organizations to respond to donor-defined targets, M&E systems, and so on. A prime example of a collective impact strategy is in Brazil with Women Win and their partner, Empodera (M. Schweickart, personal communication, February 20, 2020). They are creating a coalition of institutions that collectively seek the empowerment of girls and women to and through sport, with partners ranging from traditional sport (e.g., federations) to SfD and nonsport partners. Coalition participants will collectively agree on how to measure and report progress that will drive learning and improvement, with the first step already taken through a co-creation workshop in which a short list of common indicators was identified by coalition participants.

This type of collaboration can unlock funding opportunities within and beyond SfD through an expanding, shared, and rigorous evidence base (Svensson & Hambrick, 2019). However, this collective approach should not minimize local or national perspectives in favor of predetermined criteria parachuted into diverse contexts and cultures (Giulianotti et al., 2016; Hayhurst, 2016; Henne, 2017; Kay et al., 2016; Nicholls et al., 2011). Instead, coordinated, collaborative monitoring, evaluation, and research efforts should attend to “bottom-up,” contextually relevant, and culturally attuned approaches. For example, Laureus Sport for Good’s “Model City” collective impact approach in New Orleans supports a group of SfD organizations working within a shared, locally developed theory of change, with external funding secured for the collective. This also highlights the value of domestic funders and policy actors (particularly within the Global South), compared to the past “dominance of Global North ideologies, agendas, and input within many [SfD] interventions” (Straume, 2019, p. 54).

In making this recommendation, it is important to recognize that funders, whether public authorities or nongovernmental, are often required to aggregate data to justify and report on the combined scale of investment in SfD. In response, we recommend that funders establish a common syntax to categorize the “type” and “level” of change that a beneficiary might experience and organize the aggregation of varied programmatic data accordingly, in preference to imposing common logic models or logframes. Drawing on the framework proposed by the London Benchmarking Group (Corporate Citizenship, 2018), this approach has been recommended by the Commonwealth Secretariat as they coordinate international collaboration to deliver on Action 2 of the Kazan Action Plan (MINEPS, 2017).

MONITORING, EVALUATION, AND LEARNING PERSONNEL

Research lines and methodologies in SfD have not advanced as they should have over the past 20 years, with the systematic reviews confirming longstanding fears that the rigor in SfD research is lacking (Massey & Whitley, 2019; Whitley, Massey, Camiré, Blom et al., 2019; Whitley, Massey, Camiré, Boutet, & Borbee, 2019). This is partially attributed to a lack of funding that would provide the training, resources, and time required for rigorous monitoring, evaluation, and research (Kaufman et al., 2013). More specifically, the majority of funds are tagged for specific project delivery costs, rather than being untagged (i.e., carrying no/minimal restrictive conditions) and available for overhead costs that include monitoring, evaluation, and research. Without this support, organizations struggle to hire and retain qualified, experienced staff for monitoring, evaluation, and research roles (Jeanes & Lindsey, 2014). MEL personnel and researchers cannot address deficiencies in their training and education in this area (Kaufman et al., 2013; Whitley, Farrell et al., 2019) and are also pushed toward “low cost” work, which often excludes: (a) experimental, longitudinal, multisite, and multigroup designs; (b) valid, reliable, culturally relevant, and behaviorally based measures (e.g., direct measures of behavior change); and (c) deeply contextualized research (e.g., within local social, cultural, and political climates). Additionally, monitoring, evaluation, and research is frequently driven by “the need to demonstrate accountability,” with organizations sometimes faced with “non-negotiable requirements to collect data in forms specified by external partners” rarely “designed on the basis of local organizational culture, or with due consideration of basic practical issues such as language competence, administrative experience, IT skills and indeed access to electricity for IT systems” (Kay, 2012, p. 891). 
This certainly presents complications for organizations in conducting rigorous monitoring, evaluation, and research that is meaningful to all stakeholders, including the organizations themselves, and continues a legacy of neocolonial practices and power imbalances that undermine the autonomy, agency, and self-determination of local organizations (Hayhurst, 2016; Henne, 2017; Kay et al., 2016; Nicholls et al., 2011).

To address these concerns, traditional funding streams must be activated for training and education, although attention must be directed toward avoiding the perpetuation of neocolonial and inequitable practices (Welty Peachey et al., 2018; Whitley, Farrell et al., 2019). Just as SfD organizations have been critiqued for relying on professionals and volunteers from the Global North for intervention implementation in Global South settings (Giulianotti et al., 2016), concerns should be raised about MEL personnel and researchers who may approach monitoring, evaluation, and research in a manner that is not contextually or culturally relevant (Kay et al., 2016). This is also applicable for the tools being used for data collection, entry, and analysis, with a need for more efficiency, efficacy, contextual relevance, and cultural awareness (Kaufman et al., 2013). In response, Laureus Sport for Good has established learning communities facilitated by experienced MEL practitioners with extensive field experience, with SfD practitioners learning together about (in)effective, (ir)relevant monitoring, evaluation, and research practices. W4C hosts and participates in communities of practice at local and international levels, while streetfootballworld (2020b) facilitates forums in which key players share knowledge and exchange ideas (e.g., MEL). On a national level, Laureus USA partners with Algorhythm to support organizations’ MEL efforts, including the provision of a user-friendly platform (and related support) that facilitates pre/post program evaluation, along with developing the capacity of program staff to “make meaning” of the data. Another novel approach is funding fellowships and research grants for graduate students that can raise the level of trained MEL personnel and researchers active in the SfD field. 
For example, the Sport-Based Youth Development Fellowship at Adelphi University has provided students with access to a tuition-free Master’s degree, with specific training and education within SfD (including monitoring, evaluation, and research) (Whitley et al., 2017). Another approach is being led by the International Platform on Sport and Development (sportanddev), in partnership with the Commonwealth Secretariat and the Australian government, to develop a massive open online course (MOOC) on SfD. The MOOC will promote learning across the SfD field, including particular content on monitoring, evaluation, and research (B. Sanders, personal communication, February 20, 2020).

RESEARCH COLLABORATIONS

Another avenue for producing meaningful, rigorous research within SfD is through research collaborations between organizations, researchers, research institutions, and policy actors (Kaufman et al., 2013). These partnerships can unlock innovation and learning through the co-production of knowledge (Nicholls et al., 2011), such as economic modeling to determine potential impact if investment is scaled, social return on investment (SROI) studies, or cost-benefit analyses exploring the question of when SfD is a “best buy” option (Keane, Hoare, Richards, Bauman, & Bellew, 2019). Although this is an important theme, tokenistic measures such as cost per child can drive organizations to inflate numbers or focus on reach over quality, so these analyses must be implemented sensitively. An innovative approach is the research led by the Global Obesity Prevention Center team that is part of Public Health Informatics Computational and Operations Research (GOPC, 2019). These groups developed a computer simulation model that demonstrates the connection between increased physical activity levels and overweight and obesity prevalence, direct medical costs, years of life, and productivity. Conducting this research without the use of computer modeling would have been prohibitively expensive, if even possible. Another exemplar is from Grassroot Soccer, which has been quite prolific in their partnerships with researchers; a 2018 publication cited 276 research studies since 2005 in over 20 countries (Keyte et al., 2018). These efforts not only supported the development and growth of their organization, but also contributed knowledge to the wider SfD field. 
The Philadelphia Youth Sports Collaborative provides another example of a meaningful collaboration with a research institution, with Temple University leading the external evaluation of Game On Philly, funded by the Office of Women’s Health within the Department of Health and Human Services through the Youth Engagement in Sports (YES) Initiative (B. Devine, personal communication, February 24, 2020).

A recent survey of actors in the SfD field indicates interest among both practitioners and researchers in developing these partnerships, with expressed hope that these efforts (among others) can enhance monitoring, evaluation, and research within SfD (Whitley, Farrell et al., 2019). However, these partnerships present novel challenges that require additional training, time, and resources (Collison & Marchesseault, 2018; Keyte et al., 2018), with the potential to extend top-down power relations between funders and recipients (Kay, 2012). For example, NGOs may perceive commissioned evaluation and research as confirmation that their knowledge, ability, and reliability are being questioned (Jeanes & Lindsey, 2014; Levermore, 2011). Also, researchers may spend more time with the funders who commissioned the evaluation than with the organization (and its practitioners), given geographic barriers, all of which may limit their ability to align the research with organizational priorities (Kay, 2012). Thus, these relationships are far from straightforward, with a need for nuanced support that is rigorous, (frequently) resource intensive, and meaningful to those who will ultimately use the findings (e.g., organizations, not just policy actors or funders).

Addressing these concerns begins with resolving one of the biggest challenges for those seeking to collaborate: identifying potential partners (Keyte et al., 2018). Could a matching program similar to the National Resident Matching Program in the medical field be created for organizations, researchers, and policy actors seeking partnerships? To avoid perpetuating top-down power relations, another step should be a careful and comprehensive examination of the geopolitical realities of knowledge production (given social, economic, and geographic inequalities) (Darnell et al., 2018; Nicholls et al., 2011), along with the development of local research capacity such that local knowledge production can unfold (Kay et al., 2016). Governance should also be considered thoughtfully, including the make-up of policy development and research advisory groups, grant assessment committees, and journal editorial boards, with a particular focus on whether there is geographic parity. For example, the Commonwealth Secretariat sets targets and then monitors and reports on the geographic diversity of key advisory panels and expert bodies. It may also be beneficial to seek domestic partnerships among/within these groups, given that concerns about neocolonialism and power dynamics are often related to international partnerships. If these partnerships are international, organizations and researchers may be wise to draw on the approach and learning from Lindsey and colleagues’ (2015) example of a North-South partnership of researchers in order to enhance the diversity of perspectives impacting the data collection and knowledge generation process.
The League Bilong Laif intervention in Papua New Guinea represents a different partnership approach that includes both local and international constituents, with funding by the Australian government, delivery by the Australian Rugby League, implementation by local staff and volunteers, and evaluation by Australian-based researchers, in partnership with the Papua New Guinea government, Department of Education, and Rugby Football League (Sherry & Schulenkorf, 2016). While there were challenges early on due to uneven power relations, among other factors, there were a number of benefits as well. Sherry and Schulenkorf (2016) cited a need for all stakeholders to be “convinced by, committed to, and comfortable with the overall purpose of the initiative” in order for the intervention to be sustainable and “potentially grow impacts for wider community benefit” (p. 528). On a separate note, it is critical for research partnerships to ensure meaningful learning for all, with the expectation (and related support) for external researchers to disseminate findings to all organization actors through relevant, accessible methods (e.g., workshops with frontline staff, meetings with administrators) and support organizations with ongoing learning and decision making as a result.

TRANSPARENCY

Yet another challenge in the SfD field is the lack of transparency in reporting evaluation and research, including conflicts of interest (e.g., undeclared funders), methodologies pursued (e.g., unclear sampling procedures), and results uncovered (e.g., infrequent null and negative outcomes) (Collison & Marchesseault, 2018; Langer, 2015; Massey & Whitley, 2019; Whitley, Massey, Camiré, Blom et al., 2019; Whitley, Massey, Camiré, Boutet, & Borbee, 2019). There is a need for these norms to be deconstructed, as the SfD field cannot progress if we only have access to rose-tinted research findings. We must strive for a deeper understanding of SfD interventions, rather than simply sharing “what works” (Harris & Adams, 2016). There is also a need for (self-)reflexivity in which the messiness of monitoring, evaluation, and research in SfD is openly and honestly discussed, as the whole field stands to benefit when methods are seen as a process enabling more transparent results (Darnell et al., 2018).

How can funders support this shift? The first step is changing norms and expectations in the funding climate. This may begin with setting/refining expectations that organizations provide sufficient methodological details in their funding proposals and reports to allow for critical appraisal of the methods, methodologies, and evidence. To ensure this does not prematurely close any organizations out of funding opportunities, it may be prudent to provide adequate resources and support for organizations learning to co-create and describe their monitoring, evaluation, and research methods and methodologies. Another shift in the funding culture is the need for clear, proactive, and earnest communication from funders about their commitment to and support for organizations testing their intervention theories and identifying and reporting null and negative findings, along with engagement in constructive conversations in which grantees are encouraged to share both successes and failures (Svensson & Hambrick, 2019). The current competitive funding climate within SfD can discourage NGO staff from “highlighting particular weaknesses” as this may “have a detrimental effect on project funding, even when these limitations are the result of broader structural issues beyond the [organization’s] control” (Jeanes & Lindsey, 2014, p. 209). There needs to be a culture change within the SfD funding landscape. When organizations are able to operate in a funding climate where assessments are expected to demonstrate “what needs to improve” as well as “what works,” the tide will start to shift. This will result in more honest evaluation and scholarship and more authentic partnerships that can address organizations’ needs.
Underpinning this is the sometimes unquantifiable issue of trust, with Svensson and Hambrick (2019) noting this as a critical feature for innovative organizations, one that can result in failure occurring more frequently, along with organizational learning resulting from these attempts at innovation. Additionally, these findings can add to the SfD knowledge base in meaningful ways by being constructively critical rather than evangelical of sport’s developmental potential. One innovative example of cultivating trust unfolds through Common Goal (streetfootballworld, 2020a), whose members (i.e., players, managers, businesses, and fans) pledge 1% of their earnings to unite the global football community in advancing the SDGs. These earnings are reallocated in two ways: (a) as unrestricted funds directly to high-impact football NGOs previously vetted by streetfootballworld, utilizing 42 assessment points related to organizational strengths, programmatic strengths, and global cooperation; and (b) to the signature fund, where organizations propose collaborative, high-impact initiatives (e.g., Social Enterprise Initiative, Good Menstrual Hygiene Management, Play Proud) that pool resources, expertise, and commitment, with these collaborating organizations responsible for creating the budget (without a cap), identifying the timeline, and (if funded) overseeing the implementation. Both approaches to funding from Common Goal are founded on trust in the implementing organizations, which is critical for innovation, learning, and growth.

Another challenge to transparency in monitoring, evaluation, and research is short funding cycles (Lindsey, 2017), with SfD interventions often expected “to demonstrate immediate results” that address donor-defined targets (Sherry et al., 2017, p. 304). Creating a learning-focused environment (Sugden, 2010) in which null and negative findings are viewed as an opportunity for honest, critical reflection over longer funding cycles, rather than as a threat to funding, can lead to meaningful change. Additionally, more rigorous, longitudinal designs can be pursued, creating the opportunity to test different parts of intervention theories while also enabling funders to become invested in organizations’ growth over time, rather than in meeting specific benchmarks for their funding portfolio.

This knowledge sharing cannot be limited to organizations, their funders, and other internal stakeholders, as this will limit the shared evidence base and, ultimately, the ability for other organizations, policy actors, and funders to make evidence-informed decisions. Organizations should make their monitoring, evaluation, and research accessible, as recommended by the International Aid Transparency Initiative (2019), in an effort to improve “coordination, accountability, and effectiveness” among governments, multilateral institutions, the private sector, civil society organizations, and others. The UK charity Street League’s impact dashboard is an excellent example of this within the SfD field (publicly available at https://www.streetleague.co.uk/impact). Additionally, funders can support existing/new platforms and networks that contribute to the shared evidence base, as recommended in the Kazan Action Plan (MINEPS, 2017) and the United Nations Action Plan on Sport for Development and Peace (UN GA Resolution 73/24, 2018). This includes the International Platform on Sport and Development (sportanddev), which is supported by Laureus Sport for Good and the Commonwealth Secretariat. Other potential directions could be the creation of funding streams that invite organizations to: (a) study (un)known weaknesses in their programming, with the expectation that these organizations share their findings in public outlets (e.g., a TED Talk-style SfD forum, “The F Word: Learning through Failure” event in London in October 2019); (b) openly share past null/negative findings and the steps taken to address these results; (c) join partnerships in which individuals/organizations with current challenges are matched with those with similar backgrounds; or (d) join think tanks with others currently struggling with monitoring, evaluation, and research.

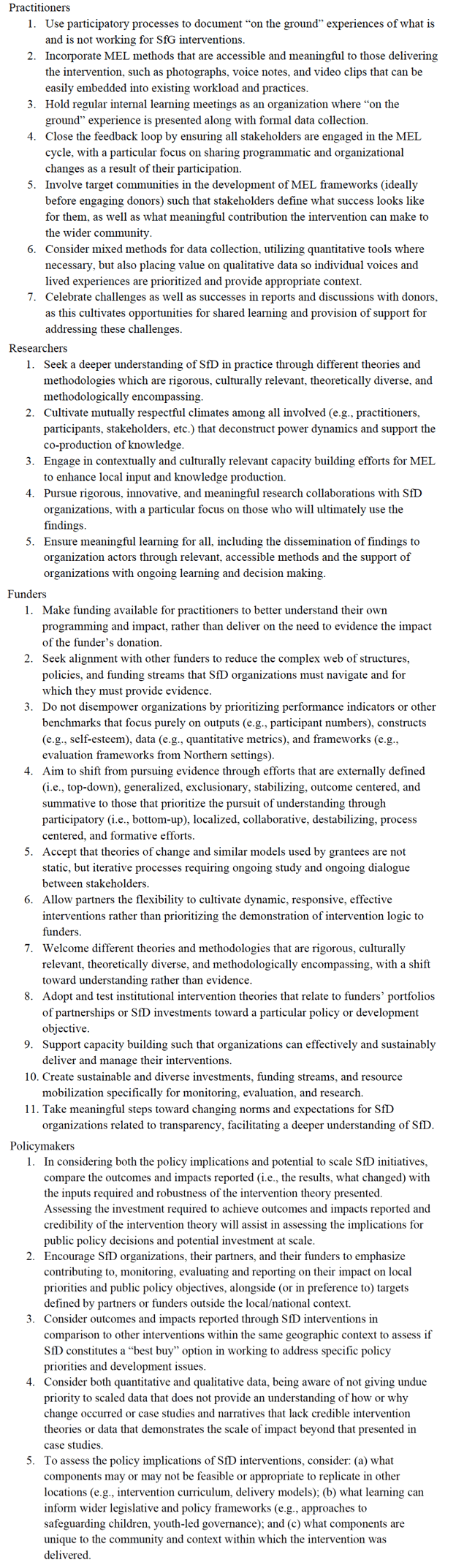

Table 1 – Actionable takeaways for monitoring, evaluation, and research in sport for development

CONCLUSION

Along with knowledge accumulated from the SfD literature and two recent systematic reviews (Darnell et al., 2019; Whitley, Massey, Camiré, Blom et al., 2019; Whitley, Massey, Camiré, Boutet, & Borbee, 2019), our writing team’s diverse experiences and expertise informed our discussion above, which we recognize has its limitations. A set of actionable takeaways, culled from the dialogue shared above, is outlined in Table 1.

Ultimately, we must keep in mind the questions that Jeanes and Lindsey (2014) challenged us with: “what evidence is required, for whom, to serve what purpose, and how this evidence is collected in practice?” (p. 212). This is complicated by the diversity of funders and bodies to which organizations are responsible (including their own communities and different levels of government), often resulting in a range of reporting expectations to the community, to public authorities, to funders, and to other stakeholders. This can add to the monitoring, evaluation, and research expectations, requiring compliance with various external frameworks and standards and the collection of varying types of data (e.g., quantitative and qualitative evidence, anecdotal evidence and narratives, visual evidence). Additionally, recent efforts by the international community (e.g., MINEPS, 2017; UN GA Resolution 73/24, 2018; Commonwealth Sports Ministers, 2018) call for greater alignment through common standards and methods. The key is ensuring that the needs of the organizations and local communities are kept at the forefront, along with the needs of the broader SfD community. SfD organizations have already shown resistance to mission drift in order to secure funding (Giulianotti, 2011), but this is a constant negotiation amid the power and associated resources within the SfD field (Nicholls et al., 2011; Straume, 2019).

Ultimately, we believe all actors must continue to support the pursuit of participatory, rigorous, process-centered (but outcome-aware) monitoring, evaluation, and research that aims to enhance our understanding of SfD. This monitoring, evaluation, and research should improve both policy and intervention design and implementation while also defining and testing more realistic, contextually relevant, culturally aware outcomes and impacts.

FUNDING

The systematic reviews were funded by the Laureus Sport for Good Foundation, the Commonwealth Secretariat, and the Laureus Sport for Good Foundation USA. The focus on youth-focused SfD interventions within specific geographic locations for the systematic reviews was partially driven by these funders. The funders did not have any further role in the design of the systematic reviews nor in the systematic review procedures. However, representatives of two of the funders are coauthors on this paper and so assisted in the interpretation and writing for this specific paper.

ACKNOWLEDGEMENTS

We thank the organizations and scholars who assisted in the identification and procurement of published and unpublished documents.

REFERENCES

Coalter, F. (2013). Sport for development: What game are we playing? Routledge.

Coalter, F., & Taylor, J. (2010). Sport-for-development impact study. Comic Relief, UK.

Collison, H., & Marchesseault, D. (2018). Finding the missing voices of Sport for Development and Peace (SDP): Using a “Participatory Social Interaction Research” methodology and anthropological perspectives within African developing countries. Sport in Society, 21(2), 226-242.

Commonwealth Secretariat. (2019a). Measuring the contribution of sport, physical education and physical activity to the Sustainable Development Goals: Toolkit and model indicators v3.1. https://thecommonwealth.org/sites/default/files/inline/Sport-SDGs-Indicator-Framework.pdf

Commonwealth Secretariat. (2019b). International framework on measuring sport’s contribution gains traction. https://thecommonwealth.org/media/news/international-framework-measuring-sport%E2%80%99s-contribution-gains-traction

Commonwealth Sports Ministers. (2018). 9th Commonwealth Sports Ministers meeting communique. http://thecommonwealth.org/sites/default/files/inline/9CSMM%20%2818%29%20Communiqu%C3%A9.pdf

Corporate Citizenship. (2018). London Benchmarking Group guidance manual. http://www.lbg-online.net/wp-content/uploads/2018/10/LBG-Public-Guidance-Manual_2018.pdf

Darnell, S. C., Chawansky, M., Marchesseault, D., Holmes, M., & Hayhurst, L. (2018). The state of play: Critical sociological insights into recent “Sport for Development and Peace” research. International Review for the Sociology of Sport, 53(2), 133-151.

Darnell, S. C., Whitley, M. A., Camiré, M., Massey, W. V., Blom, L. C., Chawansky, M., Forde, S., & Hayden, L. (2019). Systematic reviews of sport for development literature: Managerial and policy implications. Journal of Global Sport Management. https://doi.org/10.1080/24704067.2019.1671776

Gardam, K., Giles, A. R., & Hayhurst, L. M. C. (2017). Sport for development for Aboriginal youth in Canada: A scoping review. Journal of Sport for Development, 5(6), 30-40.

Giulianotti, R. (2011). Sport, transnational peacemaking, and global civil society: Exploring the reflective discourses of “sport, development, and peace” project officials. Journal of Sport and Social Issues, 35(1), 50-71.

Giulianotti, R., Hognestad, H., & Spaaij, R. (2016). Sport for development and peace: Power, politics, and patronage. Journal of Global Sport Management, 1(3-4), 129-141.

Global Obesity Prevention Center. (2019). “Modeling the economic and health impact of increasing children’s physical activity in the United States”: Social media toolkit. http://www.globalobesity.org/about-the-gopc/our-publications/physical-activity-model-social-media-toolkit.html

Harris, K., & Adams, A. (2016). Power and discourse in the politics of evidence in sport for development. Sport Management Review, 19(2), 97-106.

Haudenhuyse, R., Theeboom, M., & Nols, Z. (2013). Sports-based interventions for socially vulnerable youth: Towards well-defined interventions with easy-to-follow outcomes? International Review for the Sociology of Sport, 48(4), 471-484.

Hayhurst, L. M. C. (2016). Sport for development and peace: A call for transnational, multi-sited, postcolonial feminist research. Qualitative Research in Sport, Exercise, and Health, 8(5), 424-443.

Henne, K. (2017). Indicator culture in sport for development and peace: A transnational analysis of governance networks. Third World Thematics, 2(1), 69-86.

International Aid Transparency Initiative. (2018). About IATI. https://iatistandard.org/en/about/

International Platform on Sport and Development. (2018). What is monitoring and evaluation (M&E)? https://www.sportanddev.org/en/toolkit/monitoring-and-evaluation/what-monitoring-and-evaluation-me

Jaitman, L., & Scartascini, C. (2017). Sports for Development. Inter-American Development Bank. https://publications.iadb.org/en/publication/17342/sports-development

Jamaica Information Service. (2019). Government to strengthen mechanisms to evaluate contribution of sport. https://jis.gov.jm/government-to-strengthen-mechanisms-to-evaluate-contribution-of-sport/

James, C. (2011). Theory of change review: A report commissioned by Comic Relief. Comic Relief. http://www.actknowledge.org/resources/documents/James_ToC.pdf

Jeanes, R., & Lindsey, I. (2014). Where’s the “evidence”? Reflecting on monitoring and evaluation within sport-for-development. In K. Young & C. Okada (Eds.), Sport, social development and peace (Research in the sociology of sport, Vol. 8) (pp. 197-218). Emerald Group.

Kaufman, Z., Rosenbauer, B. P., & Moore, G. (2013). Lessons learned from monitoring and evaluating sport-for-development programmes in the Caribbean. In N. Schulenkorf & D. Adair (Eds.), Global Sport for Development (pp. 173-193). Palgrave Macmillan.

Kay, T. (2012). Accounting for legacy: Monitoring and evaluation in sport in development relationships. Sport in Society, 16(6), 888-904.

Kay, T., Mansfield, L., & Jeanes, R. (2016). Researching with Go Sisters, Zambia: Reciprocal learning in sport for development. In L. Hayhurst, T. Kay, & M. Chawansky (Eds.), Beyond sport for development and peace: Transnational perspectives on theory, policy and practice (pp. 214-229). Routledge.

Keane, L., Hoare, E., Richards, J., Bauman, A., & Bellew, W. (2019). Methods for quantifying the social and economic value of sport and active recreation: A critical review. Sport in Society, 22(12), 2203-2223. https://doi.org/10.1080/17430437.2019.1567497

Keyte, T., Whitley, M. A., Sanders, B. F., Rolfe, L., Mattila, M., Pavlick, R., & Ridout, H. B. (2018). A winning team: Scholar-practitioner partnerships in sport for development. In D. Van Rheenen & J. M. DeOrnellas (Eds.), Envisioning scholar-practitioner collaborations: Communities of practice in education and sport (pp. 3-18). Information Age Publishing.

Langer, L. (2015). Sport for development: A systematic map of evidence from Africa. South African Review of Sociology, 46(1), 66-86.

Levermore, R. (2011). Evaluating sport-for-development: Approaches and critical issues. Progress in Development Studies, 11(4), 339-353.

Levermore, R., & Beacom, A. (2014). Reassessing sport-for-development: Moving beyond “mapping the territory.” International Journal of Sport Policy and Politics, 4(1), 125-137.

Lindsey, I. (2013). Community collaboration in development work with young people: Perspectives from Zambian communities. Development in Practice, 23(4), 481-495.

Lindsey, I. (2017). Governance in sport-for-development: Problems and possibilities of (not) learning from international development. International Review for the Sociology of Sport, 52(7), 801-818.

Lindsey, I., Zakariah, A. B. T., Owusu-Ansah, E., Ndee, H., & Jeanes, R. (2015). Researching “sustainable development in African sport”: A case study of a North-South academic collaboration. In L. M. C. Hayhurst, T. Kay, & M. Chawansky (Eds.), Beyond sport for development and peace: Transnational perspectives on theory, policy and practice (pp. 196-209). Routledge.

Massey, W. V., & Whitley, M. A. (2019). SDP and research methods. In S. Darnell, R. Giulianotti, D. Howe, & H. Collison (Eds.), Routledge Handbook on Sport for Development (pp. 175-184). Routledge.

Ministers and Senior Officials Responsible for Physical Education and Sport. (2017, July 15). Kazan Action Plan. Report from the Sixth International Conference of Ministers and Senior Officials Responsible for Physical Education and Sport (MINEPS VI). Kazan, Russia: United Nations Educational, Scientific and Cultural Organization.

Nicholls, S., Giles, A. R., & Sethna, C. (2011). Perpetuating the “lack of evidence” discourse in sport for development: Privileged voices, unheard stories and subjugated knowledge. International Review for the Sociology of Sport, 46(3), 249-264.

Oxfam GB. (2020). Oxfam GB evaluation guidelines. https://www.alnap.org/help-library/oxfam-gb-evaluation-guidelines

Patton, M. Q. (2008). Utilization-focused evaluation (4th ed.). Sage.

Philadelphia Youth Sports Collaborative. (2020). Building a movement. https://pysc.org/our-work/building-movement

Poister, T. H. (2015). Performance monitoring. In K. E. Newcomer, H. P. Hatry, & J. S. Wholey (Eds.), Handbook of Practical Program Evaluation (4th ed., pp. 108-136). Jossey Bass.

Reverdito, R. S., Galatti, L. R., de Lima, L. A., Nicolau, P. S., Scaglia, A. J., & Paes, R. R. (2016). The “Programa Segundo Tempo” in Brazilian municipalities: Outcome indicators in macrosystem. Journal of Physical Education, 27(e2754), 2448-2455.

Rogers, P. J. (2008). Using programme theory to evaluate complicated and complex aspects of interventions. Evaluation, 14(1), 29-48.

Sherry, E., & Schulenkorf, N. (2016). League Bilong Laif: Rugby, education, and sport-for-development partnerships in Papua New Guinea. Sport, Education and Society, 21(4), 513-530.

Sherry, E., Schulenkorf, N., Seal, E., Nicholson, M., & Hoye, R. (2017). Sport-for-development in the South Pacific region: Macro-, meso-, and micro-perspectives. Sociology of Sport Journal, 34(4), 303-316.

Sport England. (2020). Working with Partners. https://www.sportengland.org/active-nation/working-with-partners/

Sport & Society Research Unit. (2020). Monitoring and evaluation manual for sport-for-employability programmes. Monitor. https://www.sport4employability.eu/

Straume, S. (2019). SDP structures, policies and funding streams. In S. Darnell, R. Giulianotti, D. Howe, & H. Collison (Eds.), Routledge Handbook on Sport for Development (pp. 46-58). Routledge.

streetfootballworld. (2020a). Common Goal. https://www.common-goal.org/

streetfootballworld. (2020b). What we do. https://www.streetfootballworld.org/what-we-do/events

Sugden, J. (2010). Critical left-realism and sport interventions in divided societies. International Review for the Sociology of Sport, 45(3), 258-272.

Svensson, P. G., & Hambrick, M. E. (2019). Exploring how external stakeholders shape social innovation in sport for development and peace. Sport Management Review, 22(4), 540-552. https://doi.org/10.1016/j.smr.2018.07.002

United Nations Development Programme. (2017). Movement is life: Sports and physical activities for everyone. National human development report 2017. http://hdr.undp.org/sites/default/files/reports/2798/nhdr_2017_brazil.pdf

United Nations General Assembly Resolution 73/24 (2018, December 3). Sport as an enabler of sustainable development, A/73/24. https://undocs.org/A/73/325

Welty Peachey, J., Musser, A., Shin, N. R., & Cohen, A. (2017). Interrogating the motivations of sport for development and peace practitioners. International Review for the Sociology of Sport, 53(7), 767-787. https://doi.org/10.1177/1012690216686856

Whitley, M. A., Farrell, K., Wolff, E., & Hillyer, S. (2019). Sport for development and peace: Surveying actors in the field. Journal of Sport for Development, 7(12), 1-15.

Whitley, M. A., Massey, W. V., Camiré, M., Blom, L. C., Chawansky, M., Forde, S., Boutet, M., Borbee, A., & Darnell, S. C. (2019). A systematic review of sport for youth development interventions across six global cities. Sport Management Review, 22(2), 181-193.

Whitley, M. A., Massey, W. V., Camiré, M., Boutet, M., & Borbee, A. (2019). Sport-based youth development interventions in the United States: A systematic review. BMC Public Health, 19(89). https://doi.org/10.1186/s12889-019-6387-z

Whitley, M. A., McGarry, J., Martinek, T., Mercier, K., & Quinlan, M. (2017). Educating future leaders of the sport-based youth development field. Journal of Physical Education, Recreation & Dance, 88(8), 15-20.